APEX Lab

Algorithmically Principled Exploration (APEX)

explore broadly, venture boldly

Latest Publications

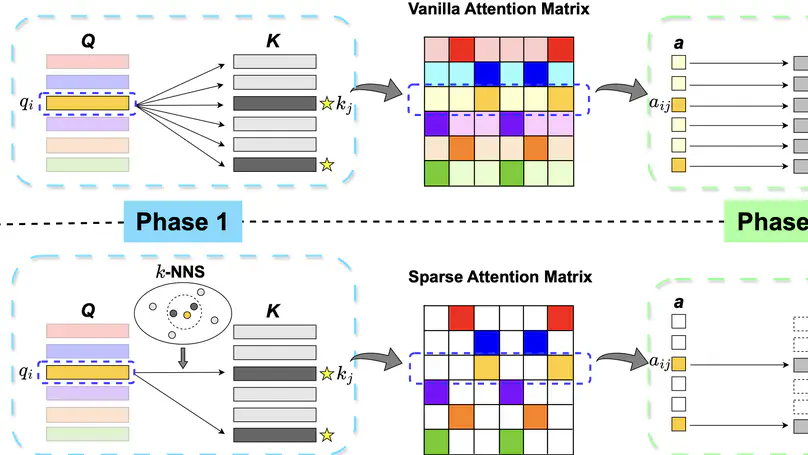

One limitation of existing Transformer-based models is that they cannot handle very long input sequences, because their self-attention operations have quadratic time and space complexity in the sequence length. This problem becomes especially acute when Transformers are deployed on hardware platforms equipped only with CPUs. To address this issue, we propose a novel method for accelerating self-attention at inference time that works with pretrained Transformer models out-of-the-box, without requiring retraining. We use our method to accelerate various long-sequence Transformers, including a leading LLaMA 2-based LLM, on various benchmarks and demonstrate speedups of 2.73x to 7.63x while retaining 98.6% to 99.6% of the accuracy of the original pretrained models. The code is available on our project website at https://yuzhenmao.github.io/IceFormer/.
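To make the quadratic cost concrete, here is a minimal NumPy sketch of standard single-head scaled dot-product attention. It is the baseline that such methods aim to speed up, not the accelerated method itself; the function name `self_attention` and the sizes `n` and `d` are illustrative assumptions.

```python
import numpy as np


def self_attention(q, k, v):
    """Standard scaled dot-product attention for a single head.

    q, k, v: arrays of shape (n, d). Illustrative baseline only.
    """
    d = q.shape[-1]
    # The score matrix has shape (n, n): this is the source of the
    # quadratic time and memory cost in the sequence length n.
    scores = q @ k.T / np.sqrt(d)
    # Numerically stable row-wise softmax.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights


rng = np.random.default_rng(0)
n, d = 512, 64  # hypothetical sequence length and head dimension
q, k, v = (rng.standard_normal((n, d)) for _ in range(3))
out, weights = self_attention(q, k, v)
print(out.shape, weights.shape)
```

Doubling `n` quadruples the number of entries in `weights`, which is why long inputs become expensive, particularly on CPU-only hardware.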

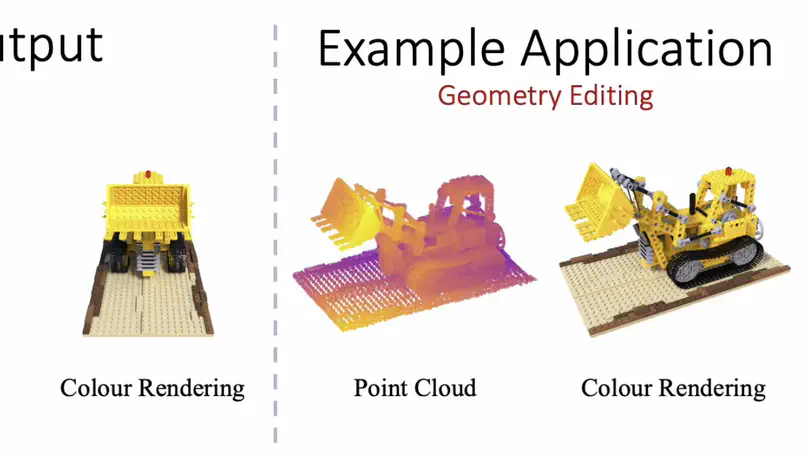

Given a set of images from different views and their corresponding camera poses, PAPR learns a point-based surface representation of the scene and a rendering pipeline from scratch. Additionally, PAPR enables practical applications such as geometry editing, object manipulation, texture transfer, and exposure control.

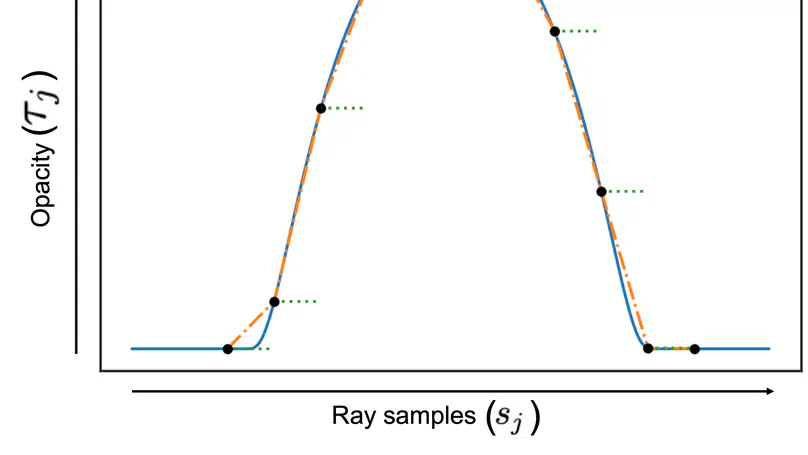

We revisit the quadrature used to approximate volume rendering in NeRF and devise an alternative quadrature formula based on a different approximation to the opacity. We derive the rendering equation under piecewise linear opacity and show that it both resolves quadrature instability and has good numerical conditioning.
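For context, the quadrature commonly used in NeRF treats the density as constant within each sampled interval along a ray. The block below writes out that standard formula, followed by the one fact that changes if the density is instead interpolated linearly between samples. The symbols ($\sigma_i$, $\delta_i$, $\mathbf{c}_i$, $T_i$) are notation assumed here, as is the reading of "opacity" as the density $\sigma(t)$ along the ray; this is a sketch of the setting, not the rendering equation derived in the paper.

```latex
% Standard NeRF quadrature (piecewise constant density).
% \sigma_i: density at sample t_i, \delta_i = t_{i+1} - t_i,
% \mathbf{c}_i: color at sample i, T_i: accumulated transmittance.
\hat{C}(\mathbf{r}) = \sum_{i=1}^{N} T_i \left(1 - e^{-\sigma_i \delta_i}\right) \mathbf{c}_i,
\qquad
T_i = \exp\!\left(-\sum_{j=1}^{i-1} \sigma_j \delta_j\right)

% If \sigma(t) is instead linear on [t_i, t_{i+1}], its integral over
% that interval is given exactly by the trapezoid rule:
\int_{t_i}^{t_{i+1}} \sigma(t)\,\mathrm{d}t = \frac{\sigma_i + \sigma_{i+1}}{2}\,\delta_i
```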